February 1, 2024 : Issue #60

WONDERCABINET : Lawrence Weschler’s Fortnightly Compendium of the Miscellaneous Diverse

WELCOME

This week, further thoughts on Gaza (drawing on passages from Barghouti, Klein, Dayan, and Oz), followed by a look back at the state of the digital animation of the face, as of 2002, as opposed to the current state of same with regard to hands.

***

FURTHER NOTES ON GAZA

This just in, from this morning’s news conference with Secretary of Defense Lloyd Austin:

Asked whether Iran should be held responsible for the recent drone attack on US forces in Jordan, Austin replied (at 33:40 here):

These are Iranian proxy groups, and how much Iran did or didn’t know, we don’t know, but it really doesn’t matter because Iran sponsors these groups, it funds these groups, in some cases it trains these groups on advanced conventional weapons, and without that facilitation, these kinds of things don’t happen.

Alas, no one in the press corps was allowed to frame the obvious follow-up as to whether exactly the same sort of analysis ought to apply to the question of the Biden administration’s responsibility, and that of America generally, for the current activities of Israeli forces in Gaza.

Speaking of which, a great catch from Adam Tooze’s Chartbooks Top Links Substack a few days back, highlighting the independent Palestinian activist Mustafa Barghouti’s spot-on response to an exasperating German interviewer:

Meanwhile, many of you will likely already have read Ezra Klein’s exceptionally lucid piece in the NY Times last weekend, but I urge those of you who haven’t to at least give it a look. It goes a long way toward laying out and then accounting for the ever-widening generational gap in attitudes toward Israel and Palestine among American Jews and for that matter Americans generally, the way in which for many if not most observers of Biden’s generation Israel is still the vital, necessary, idealistic, endlessly threatened project of their youth (and hence still and forever worthy of loyalty on that basis), whereas for young people today, Israel is the actually existing Israel, the only Israel they have ever known, which is to say the pinched and peremptory Israel of Netanyahu, which is something else altogether. The older generation still reassures itself with fantasies of an ever-receding two-state solution, while the younger one gazes grimly at the reality, the appalling facts strewn all about the ground.

Klein’s piece put me in mind of the startling passage I’d cited a few weeks back in these pages from the eulogy that Moshe Dayan (a key transitional figure in Klein’s generational formulation) had voiced back in 1956 on the occasion of the memorial service for Roi Rutenberg, a security officer of a kibbutz close to the Palestinian enclave who’d been killed by infiltrators from Gaza: how one might well pause before blaming the murderers since “For eight years now, they have sat in the refugee camps in Gaza, and before their eyes we have turned their lands and villages, where they and their fathers dwelt, into our home.”

I was recalling that line in a recent conversation with my friend the Israeli-American filmmaker Shimon Dotan (the man behind two of the most trenchant documentaries on the ongoing Middle Eastern mire from recent years, his 2006 Hot House on the steady incubation of a Palestinian future in the bowels of Israeli military prisons and the contrapuntal Settlers, from 2016, on the steady foreclosure of any such possibility by radical Zionist religious activists on the West Bank). At least back in 1956, I hazarded, when even someone like Moshe Dayan seemed to acknowledge the legitimacy of Palestinian grievances, there might have been hope for another way. But you might want to read the entirety of that eulogy, Shimon cautioned me, and indeed that evening he emailed me the full transcript (which you too can read in its entirety here). The gist of things, though, is that while Dayan indeed acknowledged the justifiable sense of grievance rising up among Palestinians, he then immediately went on to draw a diametrically opposite conclusion from the one some of us might have imagined (or at any rate hoped for):

We are a generation that settles the land and without the steel helmet and the cannon's maw, we will not be able to plant a tree and build a home. Let us not be deterred from seeing the loathing that is inflaming and filling the lives of the hundreds of thousands of Arabs who live around us. Let us not avert our eyes lest our arms weaken.

This is the fate of our generation. This is our life's choice—to be prepared and armed, strong and determined, lest the sword be stricken from our fist and our lives cut down.

Which, yeah, sounded more like the Moshe Dayan I’d originally recalled: the insight that the Gazans had every right to despise what the Israelis had done to them giving rise not so much to empathy for their situation or the resolve to move toward a fairer accommodation as to the imperative that the Israelis must armor themselves all the more relentlessly against the possibility of any future uprising. That of course being the Moshe Dayan who eleven years on, as Defense Minister, would lead the Israelis clean through to their stunning victory in the Six Day War with its thrilling occupation of all of Jerusalem and the entirety of Gaza and the West Bank, a victory in which the budding 28-year-old Israeli novelist Amos Oz had taken part as a reservist in a tank unit that had sliced clear through the Sinai. Though as early as August of that same year, writing in Davar, the newsletter of “the workers of the Land of Israel,” Oz, for his part, was already expressing profound reservations as to the lightning victory’s outcome, and especially his commander’s characterization of that outcome.

Years later, shortly after Oz’s death at age 79 in 2018, Mitch Ginsburg, a reporter for the Times of Israel, managed to dig up a microfilm of that August 22, 1967 issue of the long-defunct newsletter in question at the National Library and marveled at its prescience. Oz had begun by citing a recent public address in which Dayan had justified his insistence that Israel should retain control of the conquered territories in terms of a single phrase, the country’s need for more “lebensraum.”

I don’t know {Oz went on} how Moshe Dayan’s voice did not tremble while employing that phrase, with all the harrowing memories it raises. Living space means one thing: disenfranchising the foreigner, the inferior ‘savage’ and making place for the superior and the civilized—the powerful.

But it’s not for that that we fought. Israel’s living space is entirely before it: the wastelands of the Galilee and the Negev. We have no living space in the West Bank of the Jordan, because it is already peopled by a nation living on its land, even though it is currently a nation routed in battle. The expression ‘living space’ defiles our war. Our enemies were seemingly correct when they suspected… that behind the peace declarations upon our tongues lurked a desire for expansion and annexation…

The national mood at the time, Oz readily admitted, was leaning decidedly more toward Dayan’s position than toward his own. Still, “The shorter the occupation, the better for us,” Oz continued.

Because [any] imposed occupation is destructive, and even an enlightened and humane and liberal occupation is an occupation. I fear for the quality of the seeds we will be sowing in the near future in the hearts of the occupied. More than that, I fear for the seed that is being sown in the heart of the occupiers. And the first signs are already recognizable. {…}

“I fear,” Oz concluded that 1967 article, “that we are encircled by the thrill of victory—the very thrill that gnawed at the roots of other great nations until it flipped the wheel upon them.”

A few weeks ago, Jon Schwartz, writing in The Intercept (Jan 20, 2024), quoted a later passage of Amos Oz’s (indeed from closer to his passing) in which he’d contended that

Tragedies can be resolved in one of two ways: there is the Shakespearean resolution and there is the Chekhovian one. At the end of a Shakespearean tragedy, the stage is strewn with dead bodies and maybe there’s some justice hovering high above. A Chekhov tragedy, on the other hand, ends with everybody disillusioned, embittered, heartbroken, disappointed, absolutely shattered, but still alive. And I want a Chekhovian resolution, not a Shakespearean one, for the Israeli-Palestinian tragedy.

As should we all, perhaps, as should we all.

***

INDEX SPLENDORUM

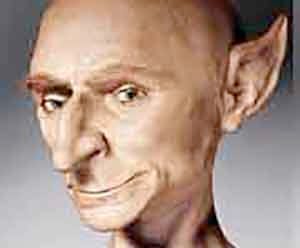

ILM’s mini-meta short “Hugo” (2000)

Back in 2000, the digital wizards at George Lucas’s Industrial Light & Magic, while pushing the boundaries of what was then possible when it came to the digital animation of the face, came up with this remarkable 18-second experimental in-house short.

But thanks to the generous and much-appreciated efforts of the good folks at the ILM archives, we can all now view “Hugo” here.

* * *

From The Archives

Over twenty years ago, the editors at Wired asked me to survey recent developments in ongoing efforts to digitally model bodies across computer game platforms and animated features. The piece that resulted, published in the June 2002 issue of the magazine, zeroed in on the incredibly complicated challenge of doing so in particular with regard to faces (referencing, for example, the ILM Hugo effort sampled in the SPLENDORUM above) and ended up wondering whether it would ever be possible, even in principle, to surmount the so-called “uncanny valley” effect.

That question is once again very much in the news what with the cascading series of recent breakthroughs arising from various Artificial Intelligence natural learning regimes which mine humongous data sets in their efforts to crack the problem, with varying degrees of success. So it seemed like it might be a good time to revisit that 2002 article of mine (which also effectively proved the title piece in my subsequent 2011 Uncanny Valley collection) since many of the same issues pertain (while many others no longer seem to).

On the Digital Animation of the Face (2002)

Wired, June 2002: “Why is This Man Smiling?”

Faces hit walls all the time in the movies, but this was different: The wall these digital filmmakers kept running up against was the face itself. Bodies, animators insist, are quite doable—no longer that big a deal. Hands are a bit more of a challenge, yet easily within reach. But the face!

The face is the one area where muscles don't necessarily attach to bone: Often, muscles fold one atop the other, one into the other. Moreover, these 44 facial muscles are capable of producing some 5,000 different expressions. So for animators, a realistic human face—in motion and, what's more, emoting—damn, that was proving tough. And some, especially given the fate of the feature film version of the celebrated video game Final Fantasy—an all-digital, $140 million box office flop—were beginning to ask themselves whether this particular wall was even theoretically scalable.

Which wasn't all that surprising to me. Naturally, I figured, as I launched out on this story, these folks can't do faces because, as everybody knows, faces (as opposed to, say, bellies or thighs) are the Seat of the Soul, and souls simply aren't quantifiable, they don't resolve themselves into so many bits – no matter how many.

Mulling over the challenges confronting animators, as I flew out to meet some of them, I recalled the formulations of the late-medieval numbers mystic, Nicholas of Cusa, who likened true knowledge of God and the Infinite to a circle within which was slotted a regular compounding n-sided polygon: A triangle, say, then a square, a pentagon, a hexagon, and so forth. Keep adding sides—a hundred, a thousand, a million—and true, Cusa conceded, it seems like you'd be getting closer and closer to the encompassing circle. But in fact, he pointed out, you'll be getting farther and farther away, because a million-sided polygon has precisely that: a million angles, a million sides. Whereas a circle has no angles and only one side. It seemed to me that the face stalkers may have set themselves a similarly impossible challenge, because a billion-bit face, no matter how seemingly close, was destined to fall infinitely short of the simple, seamless whole that is any actual face (and any actually human way of perceiving that face).

Ultimately, I figured, theirs might simply be a Fantasy Too Far.
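For readers who like to see the numbers, here is a minimal sketch of both halves of Cusa's point. It assumes a regular polygon inscribed in a circle of radius 1 and uses the standard perimeter formula 2·n·sin(π/n); the function name is illustrative only and does not come from the article.

```python
import math


def inscribed_polygon_perimeter(n: int, radius: float = 1.0) -> float:
    """Perimeter of a regular n-sided polygon inscribed in a circle of the given radius."""
    if n < 3:
        raise ValueError("a polygon needs at least 3 sides")
    # Each side is a chord subtending an angle of 2*pi/n at the center.
    return 2 * n * radius * math.sin(math.pi / n)


circumference = 2 * math.pi  # circle of radius 1

for n in (3, 4, 6, 100, 1_000, 1_000_000):
    gap = circumference - inscribed_polygon_perimeter(n)
    # The gap shrinks with every added side, yet the corner count only grows.
    print(f"{n:>9} sides -> {n:>9} corners, perimeter short of the circle by {gap:.3e}")
```

The printed gap keeps shrinking toward zero, which is the "closer and closer" half of the argument, while the second column keeps climbing, which is the half Cusa insisted on: the circle itself has no corners at all.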

*

Henrik Wann Jensen spends his days and late nights thinking about milk. "Dinosaurs are easy," he assures me, "compared to milk." A research associate in the Computer Graphics Lab at Stanford, the young Dane's focus of interest is translucency, luminosity, the soft sheen of the real: milk, marble, skin. To glow or not to glow—that is the challenge. And, it turns out, soft is hard.

Light hits a surface and bounces off, Jensen explains, himself vaulting over to his blackboard to sketch out a billiardlike bank shot, and if the surface is sufficiently opaque and reflective—metal, say, or plastic or an antlike exoskeleton—the formulas and algorithms are pretty straightforward and don't require that much computer power to flesh out. Which is one reason the early breakthroughs in realistic computer animation involved plastic toys or shiny insects or leathery dinosaurs. But photons of light behave differently upon contact with flesh or marble—or milk: They don't ricochet off the surface; they penetrate, scattering about in confounding quantum fashion and emerging at entirely different points and at altogether different angles than the Newtonian billiard model might have predicted. Use the standard formulas, and skin will end up looking like plastic, marble like concrete, and a glass of milk like a column of chalk.

Jensen boots up his computer and opens a set of illustrations: "This is a glass of digital milk, illuminated by an orbiting light source, using the standard Newtonian algorithms"—and indeed, the stuff looks distinctly unpalatable: not even liquid. Jensen explains, "If you use the Diffusion Approximation technique we've been developing—which uses a simple analytic expression to evaluate how light diffuses—you'll end up with something more like this." Like something straight out of an ad, that is. Got milk? indeed. {For a video demonstration of all this, see here.}

"Note the meniscus," says Jensen, pointing to the infinitesimal up-slope where the surface of the milk meets the glass. The meniscus—an effect of surface tension—has all sorts of light-scattering characteristics distinct from the rest of the milk, and all of that needs to be counted up and painstakingly crunched. "Most modelers forget about the meniscus and, as a result, something just doesn't look right." This is a typical sort of comment among animators, who marvel both at the tiniest of details and at the human capacity (nay, propensity) to notice those tiniest of details.

Milk matters, not simply as an exercise. According to Jensen, skim milk exhibits exactly the same light-scattering characteristics and color (a slight bluish gray) as the whites of a person's eyes. And the formulas that Jensen uses to infuse milk with luminosity turn out to do the same for marble and skin, as he demonstrates with a series of other renderings from his archive: a marble bust of a Greek goddess and then a close-up of a flesh-and-blood human nose (an enthralling rich red blushing deep inside the nostril's jet-black). Dispense with such subtle details, Jensen explains, and you end up with some of the less-than-satisfactory effects you get in Final Fantasy, for example.

Time and again, animators bring up that movie—far and away the most ambitious (and expensive) attempt to render realistic digital humans to date. Few want to criticize their fellow artists' efforts outright (and all marvel at particular effects and jaw-dropping breakthroughs), but the film keeps getting invoked as a mere instance along the way, and, as such, an indication of just how much territory remains to be crossed.

Two characters in the movie achieved a notably greater level of realism, and Jensen can tell you why. "It's not surprising," he says, "that one of the more convincingly rendered characters is the black man, because black skin is more conventionally reflective than white." The other more realistic character was the old guy, the chief scientist. "The Final Fantasy people make a big deal out of the wrinkledness and blotchiness of his face as being the explanation for its heightened sense of reality," Jensen notes, "but I think something else is at work. Because older actors and actresses in America go to great lengths to disguise their age with heavily caked-on makeup, which is highly reflective and hence less prone to light-scattering. We're used to old people on the screen looking like that, which is why the relative lack of light-scattering in the old scientist's face doesn't bother us."

*

Of course, the way light hits the face, no matter how complex, is nothing compared to the subtle intricacy of the way light radiates out from the inside of that face—the light, that is, of consciousness.

"Her face lit up as he entered the room." That was light of an altogether different kind —Cusan light—and it wasn't at all clear that Jensen's machines were ever going to be able to master it.

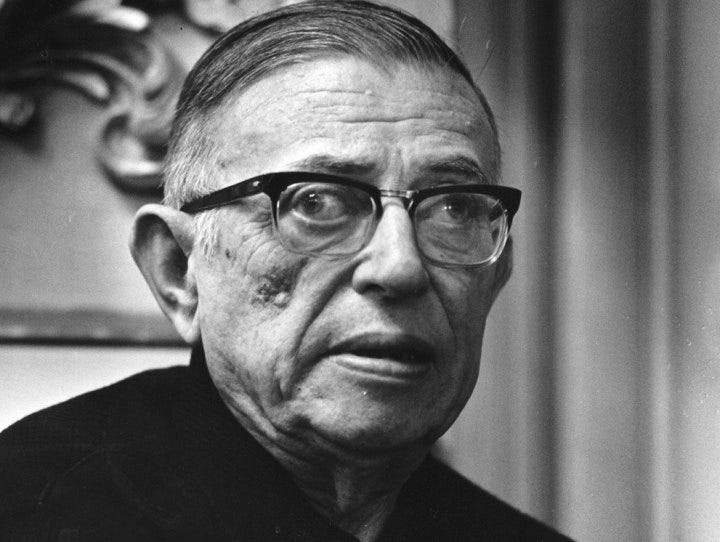

In his luminous essay, “Faces,” Jean-Paul Sartre wrote: "If I watch his eyes, I see that they are not fastened in his head, serene like agate marbles. They are being created at each moment by what they look at." Whereupon, Sartre concludes, "If we call transcendence the ability of the mind to pass beyond itself and all other things as well, to escape from itself that it may lose itself elsewhere; then to be a visible transcendence is the meaning of a face."

Being "a visible transcendence" is Sartre's way of saying (and at the same time, emphatically not saying) that we have a soul. Try animating that. And in so trying, realize, of course, that the very origin of the verb animate is the Latin word anima, or soul, such that to animate is "to ensoul"; thus it is one of the core paradoxes of the challenge these guys are facing that it is relatively easy to animate a line, the line sketch of a mouse, a digital plastic toy, a puffball monster doll—and much, much more difficult to approximate, let alone achieve, a synthetically ensouled, realistically rendered human face, the sort of face we see every day.

"But we don't have to," says Geoff Campbell, a modeler at Industrial Light & Magic, in San Rafael, California, when I broach Sartre and Cusa with him. "Our job isn't to simulate an actual human face with 100 percent fidelity. Our job is merely to fool the audience. Once you believe it, we're done."

There are hundreds—thousands—of tiny details to be gotten just right, and one of the ironies of the work is that success is gauged to the extent that they all go unnoticed. As another ILMer tells me, "We fail when the audience notices anything."

Ed Hooks, an actor and acting coach who lately has taken to coaching animators, marvels at their contradictory challenge, noting how "You're telling people, 'Be amazed, but don't notice.'"

Hooks goes on to contrast the actor's process with the animator's: "When I'm coaching an actor and he asks me, 'Should I raise my eyebrow here?' my reply is likely to be, 'I dunno. Don't think about your eyebrow. Think about the emotion you're trying to convey, and the eyebrow will take care of itself.'

"But with an animator, it's the exact opposite. You're building from the outside in. Bits don't experience emotions, and emotions get conveyed exclusively through such things as a raised eyebrow, which itself has to be precisely calibrated. Raised, okay, but by how much? And what happens to the chin when the eyebrow gets raised? To the ear? To the other ear? And so forth."

These are the kinds of details that the animator has to notice: An eye blink is more than the top lid slicing downward; as the upper lid descends, the lower lid gets pulled up and in, toward the bridge of the nose. Pupils converge toward the nose as they gaze into the distance. From a distance, one gauges where someone else is looking by a combination of the shape of the eye (that is, the way the surrounding muscles are pressing in on it) and the disposition of its white. One gauges how that person is feeling in the same way. Close-up, the really tough things to get right with the eyes are the lacrimal caruncle and the semilunar fold, which is to say that little pink nub pressed right up against the bridge of the nose, and its surrounding tissues: They move along with the pupil, as does the entire upper lid. Miss those details, and all you've got is a robotic approximation. When a mouth opens, it doesn't just open like a garage door; rather, owing to the stickiness of caked saliva, there's a sort of unzipping effect toward both corners. The way you can tell a true smile from a forced one is that, in the real one, the upper eye fold lowers slightly. (Every time! This cannot be faked!) Also, the face has to be modeled in conjunction with the body; otherwise, you can get a weird effect where the body seems to be saying one thing and the head another.

And that's not even to mention hair. Hair is a whole other article. The point is that these guys have noticed a hell of a lot so far, and they're noticing more every day. (Their polygon already has a million sides.)

*

Once the digital modelers have compiled the archive of noticed effects, they deliver it to the animator who carries out the director's commands. "Make Shrek angrier in this scene," the director says, and the animator tweaks a set of controls previously laid in by the modeler.

And now make Princess Fiona blush!

There are two principal methods for animating those expressions. The first technique—keyframe—hearkens back to the earliest traditions of animation: moving from one drawn expression to the next across a series of in-between frames, 24 per second. Only these days, the modeler tends to draw with a mouse and a keypad—and the computer itself performs a lot of the in-betweening.
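To make the in-betweening idea concrete, here is a minimal sketch, assuming the simplest possible scheme: straight linear interpolation between two keyframe poses at 24 frames per second. The control name `brow_raise` and the function `inbetween` are hypothetical, and production systems generally use smoother easing curves than this; nothing here is drawn from any studio's actual software.

```python
FPS = 24  # frames per second, the rate cited in the text


def inbetween(start_pose, end_pose, duration_seconds, fps=FPS):
    """Return one pose per frame, linearly interpolated from start_pose to end_pose.

    Poses are dicts mapping a control name to a numeric value.
    The first and last entries are the two keyframes themselves.
    """
    if start_pose.keys() != end_pose.keys():
        raise ValueError("both keyframes must define the same controls")
    steps = round(duration_seconds * fps)
    if steps < 1:
        raise ValueError("duration is too short to hold even one in-between step")
    frames = []
    for i in range(steps + 1):
        t = i / steps  # 0.0 at the first keyframe, 1.0 at the second
        frames.append(
            {name: (1 - t) * start_pose[name] + t * end_pose[name] for name in start_pose}
        )
    return frames


# Half a second of a brow going from relaxed (0.0) to fully raised (1.0).
frames = inbetween({"brow_raise": 0.0}, {"brow_raise": 1.0}, duration_seconds=0.5)
print(len(frames), frames[6])
```

The artist supplies only the two end poses; the eleven frames in between are the part the computer fills in.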

At the PDI/DreamWorks shop in Palo Alto, California, Lucia Modesto, a veteran of both Shrek and Antz, offered me an eye-popping demonstration of how Shrek was animated. The PDI facial system, originally developed 13 years ago by in-house guru Dick Walsh, is based on anatomy: muscles, tissue, bones. Even in the case of a manifestly invented face like Shrek's, the underlying bone and muscle structures are first painstakingly laid in.

Along the left side of Modesto's screen runs a scroll of activating commands—more than 500 of them, arranged according to facial feature. For the right brow, for instance, there's Raised, Mad, Sad—15 possible commands that activate not just the brow but the other parts of the face that would naturally move in conjunction with it. In addition, there is a library of 25 phonemes. So, it's possible to get Shrek to mouth out "Stop that!" while simultaneously raising his left brow angrily, turning his head sideways, and flaring his nostrils. Nostril flare too much? No problem. Click, click—lose the nostril flare. Want to see how Princess Fiona's face would look doing exactly the same thing? Simple. Click, click—and there she is: "Stop that!"

In a certain sense, this sort of animation is built on the traditions of marionette puppetry: Pull a string and the arm goes up, pull another and the hand turns in. Only here you're marionetting dozens of facial muscles, one atop the other. More strings—more commands—are being added with each new generation of characters (and in animation, a generation is only a few years); it's easy to see how down the line things could get hopelessly tangled. There could be way too many controls for anyone to be able to drive the characters. And all of this, remember, to approximate the sorts of things our own faces do automatically.

I'm reminded of the story about the Hollywood lighting director, out on the Palisade, watching the sun setting over the ocean—the incredible succession of tones and colors and blushes cast across clouds and palms and breaking waves, till finally darkness swallows it all up. "Amazing," he sighs, at length, "the effects That Guy can get with just one unit."

It's something of that same tenor that inspires the second approach to animation: facial capture. Facial motion capture is a relatively recent elaboration of the more familiar mocap systems that have been used for years to model body movement. In the latter, sensors the size of Ping-Pong balls are attached at key junctions along the limbs and bodies of actors as they perform the gestures that their animated counterparts will be expected to make. The movements of the sensors are tracked and used as guidelines for the digital draftsperson.

Facial capture works basically the same way, as Seth Rosenthal and Steve Sullivan show me at ILM's mocap studio. The animators exactingly spangle the faces of their stand-in actors with painted black dots of varying sizes, at precisely established nodal points. The more dots you add, the subtler the effects you can capture—up to a point, anyway.

*

But the question hovering just beyond the edge of this whole phenomenal exercise, at least in regard to the replication of increasingly lifelike and realistic human faces and characters, is why bother at all?

Why not just use actors?

"We ask ourselves that question every day," one of the guys at ILM acknowledged, laughing. "Fortunately, it's not our job to answer it."

There are and have been, of course, plausible uses for the technology already. The way the wizards over at Sony Pictures Imageworks inserted head-to-toe synthetic children into the Quidditch scenes in Harry Potter—scenes deemed too dangerous for real stunt kids to attempt. Or, for example, Kevin Bacon's writhing, anatomically correct contortions in Sony's Hollow Man. It's also true that much of the hyperrealistic facial animation technology is being used to create nonhuman characters—such as Shrek or Stuart Little—with ever more humanlike abilities to express emotion.

Longer term, it's easy to imagine the delights of creating nonexistent actors from scratch (not that anybody will save money doing so, not for a long time anyway), or, say, changing the ethnicities of actors who do exist, or even more. Alvy Ray Smith, the now-retired cofounder of Pixar, notes, "In a sense, an actor today is like an animator stuck in his own body. This technology might someday enable a Robert De Niro, for example, to drive somebody else's notional body, to spectacular effect."

*

Such visions, however, raise a further question, and in some senses the very question with which we began: Is such an ambition even conceptually possible? Will anyone ever be able to digitally replicate a human soul?

"Ah," responds Smith, "now you're getting into the question of consciousness itself. I, for one, think we are explainable, and I am unwilling to invoke God or some other vitalistic force to get there. It's a matter of religion with me. Now," he continues, "whether we can get there—'there,' in this case, meaning the creation of an entirely convincing, feature-length live-action film made up of entirely digital actors—I don't know. We may yet encounter some sort of conceptual roadblock along the way."

The great Japanese engineer and roboticist Masahiro Mori (author, among other things, of The Buddha in the Robot) may already have foreseen that roadblock with his notion of the Uncanny Valley. While contemplating the coming evolution of robots, he pointed out the way we can quite readily empathize with a robot that's, say, 20 percent humanlike, and even more so with a robot that's 50 percent, and even more still with a robot that's 90 percent—indeed, you can plot out a rising slope of anthropomorphizing empathy from, say, Mickey Mouse through Shrek. But somewhere beyond 95 percent, Mori hypothesizes, there's a precipitous drop-off into the Uncanny Valley. When a replicant is almost completely human, the slightest variance, the 1 percent that's not quite right, looms up enormously, rendering the entire effect somehow creepy and monstrously alien.

Andy Jones, Final Fantasy animation director, makes a similar point, arguing that, while a completely convincing replication of a human being had never been his team's goal, he, too, had noticed how "It can get eerie. As you push further and further, it begins to get grotesque. You start to feel like you're puppeteering a corpse." Similarly, PDI/DreamWorks's Lucia Modesto noted that her team had to pull back a little on Princess Fiona: She was beginning to look too real, and the effect was getting distinctly unpleasant.

Can that Valley be traversed? Well, even Mori portrays it as a valley, rising sharply back up on the far side as it approaches 100 percent similitude. I started working on this story convinced that it couldn't be crossed (it's a matter of religion with me, too). And yet …

For one thing, there are forces at work burrowing, as it were, from that other side. At one point in our conversation, I half-jokingly suggested to ILM's Rosenthal and Sullivan that if they ever did get around to replicating an actual human actor, most likely that actor would be someone whose visage had already started taking on opaque and exoskeletal characteristics, which is to say someone like Cher or Michael Jackson. "Precisely," they crowed. "Botox is our friend!" The injected biochemical agent removes wrinkles by temporarily freezing the underlying expressive muscles. In all seriousness, they suggested their technology could someday be used to extend the acting careers of people like Cher by artificially injecting into their performances expressions their faces were no longer physically capable of making.

Along the same lines, director and producer Andrew Niccol, who wrote the Truman Show screenplay, is finishing up S1m0ne, in which a down-and-out film director tries to pass off an entirely digital actress as the real thing. Today's technology wasn't good enough to contrive an actual cyberlead, but it was good enough to make the human actress playing Simone look convincingly robotic. "We're simulating a simulation," is how the real-life director, Niccol, characterizes the situation.

Alvy Ray Smith, for his part, continues to believe that a fully digital human character is attainable. "But not in five years," he says. Smith and his Pixar cofounder Ed Catmull had the idea for a completely computer-generated movie in 1974, 12 years before they founded Pixar and 21 years before Toy Story—the first convincing animation of plastic toys. "Toy Story 2 was consuming five hours of computer time per frame, and that's 24 frames per second of screen time," notes Smith. "By my estimation, the computer power needed to crunch the numbers necessary for rendering completely convincing humans is around 2,000 times what we have today, and we're not going to be there for another 20 years. And even then, we'll only be able to get there using human actors—with all their idiosyncratic mannerisms and specificities—as our models."
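Smith's figures lend themselves to a little back-of-the-envelope arithmetic. The sketch below uses only the numbers quoted above (five hours per frame, 24 frames per second, a 2,000-fold increase, 20 years). The assumption that computing power grows by steady doubling is an illustration added here, not something Smith states.

```python
import math

HOURS_PER_FRAME = 5       # computer time per frame, as quoted for Toy Story 2
FRAMES_PER_SECOND = 24    # frames per second of screen time
SPEEDUP_NEEDED = 2000     # Smith's estimate of the extra computer power required
YEARS_AVAILABLE = 20      # Smith's estimate of how long that will take

# Computer time consumed by a single second of finished film.
hours_per_screen_second = HOURS_PER_FRAME * FRAMES_PER_SECOND

# Assumption: power grows by repeated doubling at a constant pace.
doublings = math.log2(SPEEDUP_NEEDED)
years_per_doubling = YEARS_AVAILABLE / doublings

print(f"{hours_per_screen_second} computer-hours per second of screen time")
print(f"{doublings:.2f} doublings needed, one every {years_per_doubling:.2f} years")
```

On those assumptions, one second of screen time costs 120 computer-hours, and a 2,000-fold gain in 20 years works out to roughly eleven doublings, or about one every 1.8 years.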

*

Of all the things I witness during my reporting, the one that most shakes my faith in the Cusan impossibility of fabricating synthetic souls ex nihilo is Hugo, an 18-second short created by the guys at ILM a few years back.

Hugo is an entirely synthetic creation—a phantasm of light and algorithm. A wrinkled figure with Spockian ears, heightened cheekbones, and a sunken chin, he gazes off to the side of the camera, stammering, "Me? What do you mean I'm not real? Oh, I see. This is a joke, right? You must be talking about the other one." He then gulps nervously and gives a forced smile.

I bought it completely. Part of the enchantment has to do with the voice (and voices, all of the artists agree, are essential to the magic, both distracting us from transient imperfections and carrying us along). But mainly it has to do with the story. Turn off the sound, and you immediately notice the too-stiff ears, the way the eye moves when Hugo blinks, the overly rubbery consistency of the skin around the lips, and the lack of detail inside the mouth (the inner lips, tongue, and teeth weren't tracked in the mocap stage). But with the sound on, I was immediately transported into the story. The narrative. A convincing and absorbing tale is precisely what Final Fantasy lacks, and whenever that story gets bogged down, the viewer's mind wanders over to all the ways in which the rendering falls short. The sense of narrative—our tendency to experience everything as story—lies at the very core, ironically, of our own ensouled and incarnate natures.

And anyway, there's always the story about the two Oxford dons out on the commons, lost in disputation over the implications of Zeno's paradox—you know, the one where the arrow gets halfway to its target, then halfway across the remainder of the distance, then halfway across the remaining stretch, and so forth, such that it can never actually reach its goal. So anyway, the two dons—a mathematician and an engineer—are arguing over the implications of Zeno's paradox, and just then a beautiful woman goes sauntering by, and the mathematician, lost in the complexities of the paradox, despairs of ever being able to attain her. But the engineer knows he can get close enough for all practical purposes.

Close Enough for All Practical Purposes.

We long to lose ourselves in narrative—that's who we are. Well-crafted stories transport us, allow us to soar. One day, perhaps, to soar right over the Uncanny Valley and cross the Cusan Divide?

I dunno. But it sure could happen. I, for one, am starting to become a believer.

*

Postscript (2024)

From the Uncanny Valley to the Canny UnHandy

I will leave it to another day (and in the meanwhile to my fellow Cabineteers in the comments section) to weigh in on whether the newest AI mega-data-set bot applications have now in fact bridged the Uncanny Valley (remember, however, that we are talking about film or video, not still photography, where it seems to me pretty clear that bots are now generating synthetic likenesses of artificially created “persons” that indeed can fool most of us; a recent piece in the NY Times invited readers to test themselves along these lines, and I will admit to having failed dismally).

In the meantime, though, somewhat surprisingly, even with still photographs, whether completely synthetic or faked alterations of photos of real people (as, for instance, in a recent case involving Donald Trump supposedly at prayer), the biggest challenge for bot productions seems to be turning out to lie not so much with faces as with hands.

A recent piece over at Buzzfeed offered myriad further examples

and speculated that for starters this may be in part because the mega data sets upon which AI image generators draw feature many more instances of faces than hands. Meanwhile the at least temporary dilemma is generating a whole series of fresh memes, as for example this one from @weirddalle

and extending the rest of us mere humans maybe at least a few more months of lingering exceptionality.

Or so at any rate may we fervently hope and pray, our fingers in a desperate rictus clench of crossedness!

***

ANIMAL MITCHELL

Cartoons by David Stanford, from the Animal Mitchell archive

* * *

Thank you for giving Wondercabinet some of your reading time! We welcome not only your public comments (button above), but also any feedback you may care to send us directly: weschlerswondercabinet@gmail.com.